Using SQS to Throttle Writes to DynamoDB

Hi! I'm Guille Ojeda, Cloud Architecture Consultant. To put it simply, I create simple solutions for complex technical problems.

My focus is on designing scalable, secure, and cost-effective cloud solutions that startups, scaleups and SMBs can lean on to grow.

I lean on years of experience in cloud architecture and consulting to deliver solutions that are easy to understand and manage.

We're running an e-commerce platform, where people publish products and other people purchase those products. Our backend has some highly scalable microservices running on well-designed Lambdas, and there's a lot of caching involved. Our order processing microservice writes to a DynamoDB table we set up following How to Design a DynamoDB Database. We're using DynamoDB provisioned capacity mode with auto scaling. We did a great job and everything runs smoothly.

Suddenly, someone's product goes viral, and a lot of people rush in to buy it at the same time. Our cache and CDN don't even blink at the traffic, our well-designed Lambdas scale amazingly fast, but our DynamoDB table is suddenly bombarded with writes and the auto scaling can't keep up. Our order processing Lambda receives ProvisionedThroughputExceededException, and when it retries it just makes everything worse. Things crash. Sales are lost. We eventually recover, but those customers are gone. How do we make sure it doesn't happen again?

Option 1 is to change the DynamoDB table to On-demand, which can keep up with Lambda when scaling, but it's over 5x more expensive. Option 2 is to make sure the table's write capacity isn't exceeded. Let's explore option 2.

AWS Services involved:

DynamoDB: Our database. All you need to know for this post is how DynamoDB scales.

SQS: A fully managed message queuing service that enables you to decouple components. Producers like our order processing microservice post to the queue, the queue stores these messages until they're read, and consumers read from the queue in their own time.

SES: An email platform, more similar to services like MailChimp than to an AWS service. If you're already on AWS and you just need to send emails programmatically, it's easy to set up. If you're not on AWS, need more control, or need to send so many emails that price is a factor, you'll need to do some research. For this post, SES is good enough.

What is Amazon SQS

SQS is a fully managed message queuing service. A messaging queue is a data structure where items can be read in the same order as they were written: First-In, First-Out (FIFO).

Queues allow us to decouple components by making the consumer (that's the component that reads from the queue) unaware of who wrote the item (the writer is called producer). Additionally, in software architecture we usually focus on another characteristic of queues: The reading of the item can happen some time after the writing. This lets us decouple producers and consumers in time: Consumers don't need to be available when Producers write to the queue. The queue stores the messages for a certain amount of time, and when Consumers are ready, they poll the queue for messages, and receive the oldest message.

For our solution, we're going to use a queue so that our order processing microservice can send a message with the order, the queue stores the message, and a consumer can read it at its own rhythm (i.e. at our DynamoDB table's rhythm).

Types of SQS Queues

There are two types of queues in SQS:

Standard queues are the default type of queue. They're cheaper than FIFO queues and nearly-infinitely scalable. The tradeoff is that they only guarantee at-least-once delivery (meaning you might get duplicates), and order of the messages is mostly respected but not guaranteed.

FIFO queues are more expensive than Standard queues, and they don't scale infinitely, but they guarantee ordered, exactly-once delivery. You need to set the MessageGroupId property in the message, since FIFO queues only deliver the next message in a message group after the previous message has been successfully processed. For example, if you set the value of MessageGroupId to the customer ID and a customer makes two orders at the same time, the second one to come in won't be processed until the first one is finished processing. It's also important to set MessageDeduplicationId, to ensure that if the message gets duplicated upstream, it will be deduplicated at the queue. Within the 5-minute deduplication interval, a FIFO queue will only keep one message per unique value of MessageDeduplicationId.
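To make those two properties concrete, here's a toy in-memory model. It's only a sketch of the semantics, not how SQS works internally, and the names (ToyFifoQueue, send, receive, done) are made up for this illustration. Unlike SQS, the toy never forgets a deduplication ID.

```javascript
// Toy model of FIFO semantics: deduplication plus one in-flight message per group.
// This is an illustration only, NOT the SQS API or its implementation.
class ToyFifoQueue {
  constructor() {
    this.messages = [];               // pending messages, in arrival order
    this.seenDedupIds = new Set();    // SQS remembers these for 5 minutes; the toy never forgets
    this.inFlightGroups = new Set();  // groups with a message currently being processed
  }

  // Returns false when the message is dropped as a duplicate.
  send({ body, groupId, dedupId }) {
    if (this.seenDedupIds.has(dedupId)) return false;
    this.seenDedupIds.add(dedupId);
    this.messages.push({ body, groupId });
    return true;
  }

  // Returns the oldest message whose group has nothing in flight, or null.
  receive() {
    const index = this.messages.findIndex((m) => !this.inFlightGroups.has(m.groupId));
    if (index === -1) return null;
    const [message] = this.messages.splice(index, 1);
    this.inFlightGroups.add(message.groupId);
    return message;
  }

  // Called when the consumer finishes a message; unblocks its group.
  done(message) {
    this.inFlightGroups.delete(message.groupId);
  }
}
```

If customer-1 sends two orders and customer-2 sends one, the consumer gets customer-1's first order, then customer-2's order, and only sees customer-1's second order after calling done on the first.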

Most people who think of queues are thinking of guaranteed FIFO order and exactly-once delivery. The only way to actually get those guarantees is with FIFO queues.

How to Implement an SQS Queue for DynamoDB

Follow these step-by-step instructions to implement an SQS Queue to throttle writes to a DynamoDB table. Replace YOUR_ACCOUNT_ID and YOUR_REGION with the appropriate values for your account and region.

Create the Orders Queue

Go to the SQS console.

Click "Create queue"

Choose the "FIFO" queue type (not the default Standard)

In the "Queue name" field enter "OrdersQueue.fifo" (FIFO queue names must end in ".fifo")

Leave the rest as default

Click on "Create queue"
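If you'd rather script this step than click through the console, the same queue can be described as parameters for the SQS CreateQueue call. This is a minimal sketch: buildOrdersQueueParams is a hypothetical helper, and the commented usage assumes the aws-sdk v2 package used in the rest of this post.

```javascript
// Builds the parameters for the SQS CreateQueue API call.
// buildOrdersQueueParams is a hypothetical helper name, not part of the SDK.
function buildOrdersQueueParams(baseName = 'OrdersQueue') {
  return {
    QueueName: `${baseName}.fifo`, // FIFO queue names must end in ".fifo"
    Attributes: {
      FifoQueue: 'true',                  // attribute values are strings
      ContentBasedDeduplication: 'false'  // we send an explicit MessageDeduplicationId instead
    }
  };
}

// Usage (assumes aws-sdk v2 and credentials with sqs:CreateQueue):
//   const AWS = require('aws-sdk');
//   const result = await new AWS.SQS().createQueue(buildOrdersQueueParams()).promise();
//   console.log(result.QueueUrl);
```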

Update the Orders service to write to the SQS queue

We need to update the code of the Orders service so that it sends the new Order to the Orders Queue, instead of writing to the Orders table. This is what the code looks like:

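const AWS = require('aws-sdk');

const sqs = new AWS.SQS();
// FIFO queue names (and therefore their URLs) end in ".fifo"
const queueUrl = 'https://sqs.YOUR_REGION.amazonaws.com/YOUR_ACCOUNT_ID/OrdersQueue.fifo';

async function processOrder(order) {
  const params = {
    MessageBody: JSON.stringify(order),
    QueueUrl: queueUrl,
    // Orders from the same customer are delivered one at a time, in order
    MessageGroupId: order.customerId,
    // Must be unique per order; uses the same orderId field the consumer reads
    MessageDeduplicationId: order.orderId
  };

  try {
    const result = await sqs.sendMessage(params).promise();
    console.log('Order sent to SQS:', result.MessageId);
  } catch (error) {
    console.error('Error sending order to SQS:', error);
    // Rethrow so the caller knows the order was not queued
    throw error;
  }
}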

Also, add this policy to the IAM Role of the function, so it can access SQS. Don't forget to delete the permissions to access DynamoDB!

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "sqs:SendMessage",
      "Resource": "arn:aws:sqs:YOUR_REGION:YOUR_ACCOUNT_ID:OrdersQueue.fifo"
    }
  ]
}

Stop copying cloud solutions, start understanding them. Join over 45,000 devs, tech leads, and experts learning how to architect cloud solutions, not pass exams, with the Simple AWS newsletter.

Set up SES to notify the customer via email

Open the SES console

Click on "Domains" in the left navigation pane

Click "Verify a new domain"

Follow the on-screen instructions to add the required DNS records for your domain.

Alternatively, click on "Email Addresses" and then click the "Verify a new email address" button. Enter the email address you want to verify and click "Verify This Email Address". Check your inbox and click the link.

Set up the Order Processing service

Go to the Lambda console and create a new Lambda function. Add the following code:

const AWS = require('aws-sdk');

const dynamoDB = new AWS.DynamoDB.DocumentClient();
const ses = new AWS.SES();

exports.handler = async (event) => {
  // With Batch size set to 1, each invocation receives a single record
  for (const record of event.Records) {
    const order = JSON.parse(record.body);
    await saveOrderToDynamoDB(order);
    await sendEmailNotification(order);
  }
};

async function saveOrderToDynamoDB(order) {
  const params = {
    TableName: 'Orders', // table names are case-sensitive; matches the IAM policy below
    Item: order
  };

  try {
    await dynamoDB.put(params).promise();
    console.log(`Order saved: ${order.orderId}`);
  } catch (error) {
    console.error(`Error saving order: ${order.orderId}`, error);
    // Rethrow so the invocation fails and SQS makes the message available again
    throw error;
  }
}

async function sendEmailNotification(order) {
  const emailParams = {
    Source: 'you@simpleaws.dev',
    Destination: {
      ToAddresses: [order.customerEmail]
    },
    Message: {
      Subject: {
        Data: 'Your order is ready'
      },
      Body: {
        Text: {
          Data: `Thank you for your order, ${order.customerName}! Your order #${order.orderId} is now ready.`
        }
      }
    }
  };

  try {
    await ses.sendEmail(emailParams).promise();
    console.log(`Email sent: ${order.orderId}`);
  } catch (error) {
    // Logged but not rethrown: a failed email does not cause the order to be retried
    console.error(`Error sending email for order: ${order.orderId}`, error);
  }
}

Also, add the following IAM Policy to the IAM Role of the function, so it can be triggered by SQS and access DynamoDB and SES:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "sqs:ReceiveMessage",
        "sqs:DeleteMessage",
        "sqs:GetQueueAttributes"
      ],
      "Resource": "arn:aws:sqs:YOUR_REGION:YOUR_ACCOUNT_ID:OrdersQueue.fifo"
    },
    {
      "Effect": "Allow",
      "Action": [
        "dynamodb:PutItem",
        "dynamodb:UpdateItem",
        "dynamodb:DeleteItem"
      ],
      "Resource": "arn:aws:dynamodb:YOUR_REGION:YOUR_ACCOUNT_ID:table/Orders"
    },
    {
      "Effect": "Allow",
      "Action": "ses:SendEmail",
      "Resource": "*"
    }
  ]
}

Make the Orders Queue trigger the Order Processing service

In the Lambda console, go to the Order Processing lambda

In the "Function overview" section, click "Add trigger"

Click "Select a trigger" and choose "SQS"

Select the Orders Queue

Set Batch size to 1

Make sure that the "Enable trigger" checkbox is checked

Click "Add"
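The console steps above create what Lambda calls an event source mapping. As a rough sketch, the same trigger can be expressed as parameters for Lambda's CreateEventSourceMapping call. buildOrdersTriggerParams and the function name OrderProcessing are assumptions made for this example; use whatever names you actually gave your queue and function.

```javascript
// Builds the parameters for Lambda's CreateEventSourceMapping API call.
// buildOrdersTriggerParams is a hypothetical helper, not part of the SDK.
function buildOrdersTriggerParams({ region, accountId, queueName, functionName }) {
  return {
    EventSourceArn: `arn:aws:sqs:${region}:${accountId}:${queueName}`,
    FunctionName: functionName,
    BatchSize: 1,  // one order per invocation, same as the console setting
    Enabled: true  // same as checking "Enable trigger"
  };
}

// Usage (assumes aws-sdk v2; 'OrderProcessing' is an assumed function name):
//   const AWS = require('aws-sdk');
//   const params = buildOrdersTriggerParams({
//     region: 'YOUR_REGION',
//     accountId: 'YOUR_ACCOUNT_ID',
//     queueName: 'OrdersQueue.fifo',
//     functionName: 'OrderProcessing'
//   });
//   await new AWS.Lambda().createEventSourceMapping(params).promise();
```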

Limit concurrent executions of the Order Processing Lambda

In the Lambda console, go to the Order Processing lambda

Scroll down to the "Concurrency" section

Click "Edit"

Choose to reserve concurrency and set "Reserved concurrency" to 10 (this is a separate setting from Provisioned Concurrency, which you don't need here)

Click "Save"
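Where does a number like 10 come from? Here's a back-of-the-envelope sketch, assuming each invocation does exactly one PutItem, and that a standard write of an item up to 1 KB consumes 1 write capacity unit (larger items round up per KB). The helper names and the example numbers are made up for illustration; measure your own item size and invocation duration.

```javascript
// Rough upper bound on Lambda concurrency for a given provisioned write capacity.
// Assumes one PutItem per invocation and standard (non-transactional) writes.
function maxConcurrencyForTable({ provisionedWcu, itemSizeKb, avgInvocationSeconds }) {
  const wcuPerWrite = Math.max(1, Math.ceil(itemSizeKb)); // 1 WCU per KB, rounded up
  const writesPerSecond = provisionedWcu / wcuPerWrite;
  // Each concurrent execution performs 1 / avgInvocationSeconds writes per second.
  return Math.max(1, Math.floor(writesPerSecond * avgInvocationSeconds));
}

// Builds the parameters for Lambda's PutFunctionConcurrency API call.
function buildReservedConcurrencyParams(functionName, reservedConcurrency) {
  return { FunctionName: functionName, ReservedConcurrentExecutions: reservedConcurrency };
}

// Example: 40 WCUs, 2 KB orders, 0.5 s per invocation
// -> 20 writes per second -> at most 10 concurrent executions.
const limit = maxConcurrencyForTable({ provisionedWcu: 40, itemSizeKb: 2, avgInvocationSeconds: 0.5 });
console.log(limit); // 10

// Usage (assumes aws-sdk v2; 'OrderProcessing' is an assumed function name):
//   const AWS = require('aws-sdk');
//   await new AWS.Lambda()
//     .putFunctionConcurrency(buildReservedConcurrencyParams('OrderProcessing', limit))
//     .promise();
```

This is a ceiling, not a target. Leave some headroom, since auto scaling takes time to react and other writers may share the table.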

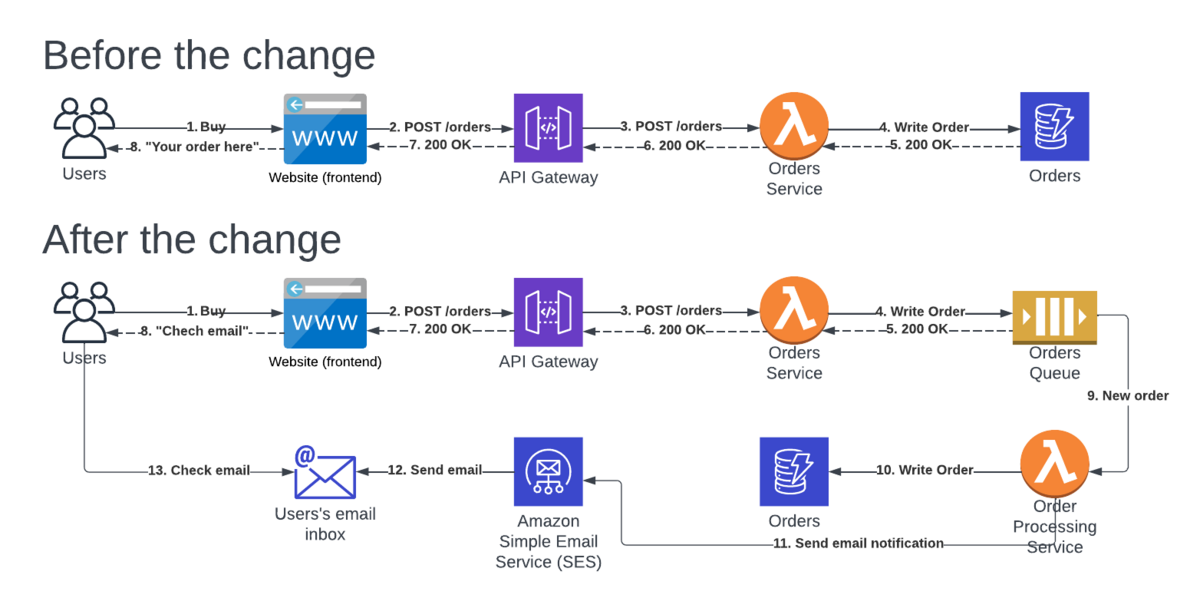

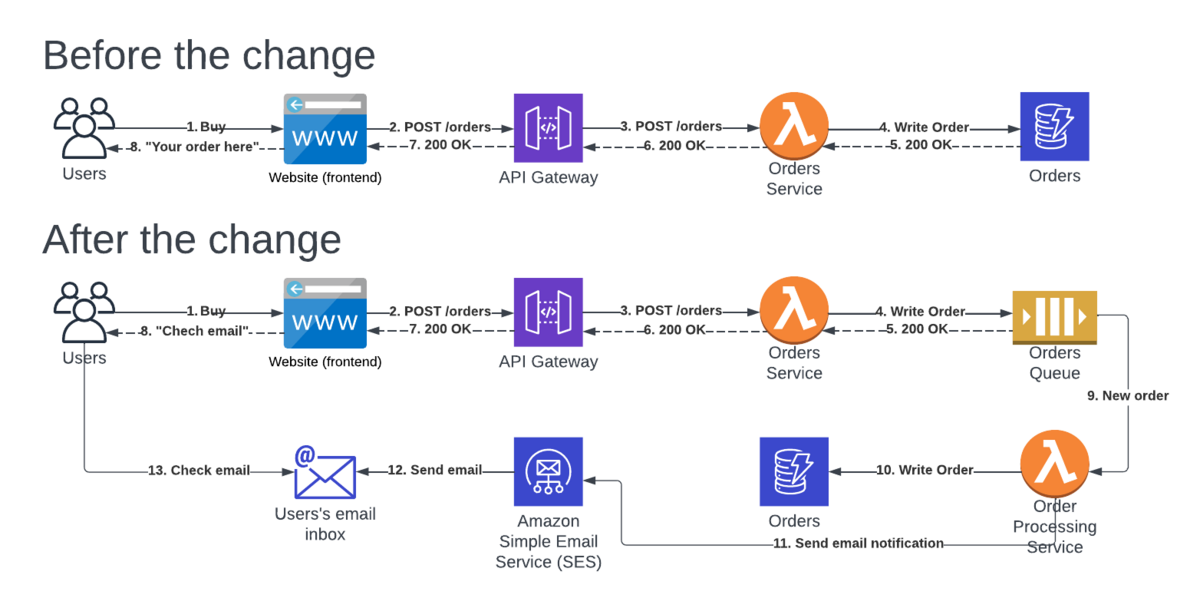

Synchronous and Asynchronous Workflows with SQS

Architecture-wise, there's one big change in our solution: We've made our workflow async! Let me bring the diagram here.

Before, our Orders service would return the result of the order. From the user's perspective, they wait until the order is processed, and they see the result on the website. From the system's perspective, we're constrained to either succeed or fail processing the order in the timeout limit of API Gateway (29 seconds). In more practical terms, we're limited by what the user is expecting: we can't just show a "loading" icon for 29 seconds!

After the change, the website just shows something like "We're processing your order, we'll email you when it's ready". That sets a different expectation for the user. That's important for the system, because now we could actually have our Lambda function take 15 minutes, without hitting the 29-second limit of API Gateway and without the user getting angry. It's not just that, though. If the Order Processing lambda crashes mid-execution, the SQS queue will make the order available again as a message after the visibility timeout expires, and the Lambda service will invoke our function again with the same order. When the maxReceiveCount limit is reached, the order can be sent to another queue called a Dead Letter Queue (DLQ), where we can store failed orders for future reference. We didn't set up a DLQ here, but it's easy enough, and for small and medium-sized systems you can easily set up SNS to send you an email and resolve the issue manually, since the volume shouldn't be particularly large.
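For reference, attaching a DLQ comes down to setting a RedrivePolicy attribute on the source queue. Here's a minimal sketch, assuming you've already created a second queue to act as the DLQ (for a FIFO source queue, the DLQ must also be a FIFO queue). buildRedriveParams is a hypothetical helper, and the default of 5 attempts is an arbitrary choice for the example.

```javascript
// Builds the parameters for the SQS SetQueueAttributes call that attaches a DLQ.
// buildRedriveParams is a hypothetical helper, not part of the SDK.
function buildRedriveParams({ queueUrl, deadLetterQueueArn, maxReceiveCount = 5 }) {
  return {
    QueueUrl: queueUrl,
    Attributes: {
      // RedrivePolicy is passed as a JSON string, not as a nested object
      RedrivePolicy: JSON.stringify({
        deadLetterTargetArn: deadLetterQueueArn,
        maxReceiveCount
      })
    }
  };
}

// Usage (assumes aws-sdk v2; both the queue URL and the DLQ ARN are placeholders):
//   const AWS = require('aws-sdk');
//   await new AWS.SQS().setQueueAttributes(buildRedriveParams({
//     queueUrl: 'https://sqs.YOUR_REGION.amazonaws.com/YOUR_ACCOUNT_ID/OrdersQueue.fifo',
//     deadLetterQueueArn: 'arn:aws:sqs:YOUR_REGION:YOUR_ACCOUNT_ID:OrdersDLQ.fifo'
//   })).promise();
```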

Once the order went through all the steps, failed some, retried, succeeded, etc, then we notify the user that their order is "ready". This can look different for different systems, some are just a "we got the money", some ship physical products, some onboard the user to a complex SaaS. For this solution I chose to do it via email because it's easy and common enough, but you could use a webhook and still keep the process async.

Best Practices for SQS and DynamoDB

Operational Excellence

Monitor and set alarms: You know how to monitor Lambdas. You can monitor SQS queues as well! An interesting alarm to set here would be number of orders in the queue, so our customers don't wait too long for their orders to be processed.

Handle errors and retries: Be ready for anything to fail, and architect accordingly. Set up a DLQ, set up notifications (to you and to the user) for when things fail, and above all don't lose/corrupt data.

Set up tracing: We're complicating things a bit (hopefully for a good reason). We can gain better visibility into that complexity by setting up X-Ray.
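As a starting point for the queue alarm mentioned above, here's a sketch of the parameters for CloudWatch's PutMetricAlarm call, watching the ApproximateNumberOfMessagesVisible metric that SQS publishes. The helper name, the alarm name, the evaluation window and the SNS topic are all assumptions for this example; pick a threshold based on how long your customers can wait.

```javascript
// Builds the parameters for CloudWatch's PutMetricAlarm API call.
// buildQueueDepthAlarmParams is a hypothetical helper, not part of the SDK.
function buildQueueDepthAlarmParams({ queueName, threshold, alarmTopicArn }) {
  return {
    AlarmName: `${queueName}-backlog`,
    Namespace: 'AWS/SQS',
    MetricName: 'ApproximateNumberOfMessagesVisible', // messages waiting to be received
    Dimensions: [{ Name: 'QueueName', Value: queueName }],
    Statistic: 'Maximum',
    Period: 60,            // seconds per data point
    EvaluationPeriods: 5,  // alarm after 5 consecutive periods above the threshold
    Threshold: threshold,
    ComparisonOperator: 'GreaterThanThreshold',
    AlarmActions: [alarmTopicArn] // e.g. an SNS topic that emails you
  };
}

// Usage (assumes aws-sdk v2; the topic ARN is a placeholder):
//   const AWS = require('aws-sdk');
//   await new AWS.CloudWatch().putMetricAlarm(buildQueueDepthAlarmParams({
//     queueName: 'OrdersQueue.fifo',
//     threshold: 100,
//     alarmTopicArn: 'arn:aws:sns:YOUR_REGION:YOUR_ACCOUNT_ID:OrdersAlerts'
//   })).promise();
```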

Security

Check "Enable server-side encryption": That's all you need to do for an SQS queue to be encrypted at rest: check that box, and pick a KMS key. SQS communicates over HTTPS, so you already have encryption in transit.

Tighten permissions: The IAM policies in this post are pretty restrictive. But there's always a nut to tighten, so keep your eyes open.

Reliability

Set up maxReceiveCount and a DLQ: With a FIFO queue, the next message in the same message group won't be available for processing until the previous one is either processed successfully or dropped (to the DLQ if you set one) after maxReceiveCount attempts. If you don't set these, one corrupted order will block every order behind it in that message group.

Set visibility timeout: This is the time that SQS waits without receiving the "success" response, before assuming the message wasn't processed successfully and making it available again for the next consumer. Set a reasonable value that's never shorter than the timeout of your consumer (Order Processing lambda in this case). For Lambda triggers, AWS recommends a visibility timeout of at least six times the function timeout, to leave room for retries.
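Here's a small sketch of how the visibility timeout could be derived from the function timeout, using the "at least six times the function timeout" guidance from AWS's documentation for Lambda SQS triggers. buildVisibilityTimeoutParams is a hypothetical helper, and the multiplier is a parameter so you can choose your own.

```javascript
// Builds the parameters for the SQS SetQueueAttributes call that sets the visibility timeout.
// buildVisibilityTimeoutParams is a hypothetical helper, not part of the SDK.
function buildVisibilityTimeoutParams({ queueUrl, functionTimeoutSeconds, multiplier = 6 }) {
  const maxVisibilityTimeout = 43200; // SQS allows at most 12 hours
  const visibilityTimeout = Math.min(functionTimeoutSeconds * multiplier, maxVisibilityTimeout);
  return {
    QueueUrl: queueUrl,
    Attributes: { VisibilityTimeout: String(visibilityTimeout) } // attribute values are strings
  };
}

// Usage (assumes aws-sdk v2; the queue URL is a placeholder):
//   const AWS = require('aws-sdk');
//   await new AWS.SQS().setQueueAttributes(buildVisibilityTimeoutParams({
//     queueUrl: 'https://sqs.YOUR_REGION.amazonaws.com/YOUR_ACCOUNT_ID/OrdersQueue.fifo',
//     functionTimeoutSeconds: 30
//   })).promise();
```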

Performance Efficiency

Optimize Lambda function memory: More memory means more money. But it also means faster processing. Going from 30 to 25 seconds won't matter much for a successfully processed order, but if orders are retried 5 times, now it's 25 seconds we're gaining instead of 5. Could be worth it, depending on your customers' expectations.

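Use Batch processing: We set Batch size to 1 to keep things simple, but you should consider processing messages in batches. You get fewer invocations per order, at the cost of having to handle batches where only some of the orders fail.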

Remember the 20 advanced tips for Lambda.
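If you do move to batches, one way to handle a partly failed batch is Lambda's partial batch response: the handler returns the IDs of the messages that should go back to the queue, and only those are retried. This is a sketch, assuming ReportBatchItemFailures is enabled on the SQS trigger. makeBatchHandler and processOrder are hypothetical names; processOrder stands for whatever saves one order and notifies the customer.

```javascript
// Wraps a per-order function into a batch-aware Lambda handler.
// makeBatchHandler and processOrder are hypothetical names for this example.
function makeBatchHandler(processOrder) {
  return async function handler(event) {
    const batchItemFailures = [];
    let failed = false;

    for (const record of event.Records) {
      if (failed) {
        // With a FIFO queue, stop at the first failure and hand back the
        // remaining messages unprocessed, so orders aren't handled out of order.
        batchItemFailures.push({ itemIdentifier: record.messageId });
        continue;
      }
      try {
        await processOrder(JSON.parse(record.body));
      } catch (error) {
        console.error(`Order failed for message ${record.messageId}`, error);
        failed = true;
        batchItemFailures.push({ itemIdentifier: record.messageId });
      }
    }

    // An empty list tells Lambda the whole batch succeeded.
    return { batchItemFailures };
  };
}

// Usage inside the Lambda function (saveAndNotify is an assumed function):
//   exports.handler = makeBatchHandler(saveAndNotify);
```

Messages that aren't listed in batchItemFailures are deleted from the queue, so a single bad order no longer forces the whole batch to be retried.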

Cost Optimization

Provisioned vs. On-demand for DynamoDB: Remember that this could be fixed by using our DynamoDB table in On-demand mode. It's 5x more expensive though. Same goes for relational databases (if we use Aurora, then Aurora Serverless is an option).

Consider something other than Lambda: In this case, we're trying to get all orders processed relatively fast. If the processing can wait a bit more, an auto scaling group that scales based on the number of messages in the SQS queue can work wonders, for a lot less money.


If you'd like to know more about me, you can find me on LinkedIn or at www.guilleojeda.com